Composition with Semantically Rich Names

In my previous post, I remarked on the fact that composition, while central to our work as engineers, is often poorly supported by the tools we use. In this post I want to explore a specific trend away from this state of affairs, where composition is given the first-class treatment it deserves.

When engineering with atoms, composing components often consists of literally putting them together: you take piece A, move it close to piece B, and combine them by inserting tab X into slot Y. Sometimes an analogous case occurs in software: developers may apply two particular design patterns in one class definition and compose them by ensuring the overlap meets both sets of requirements, or copy in a template or project scaffold to compose a general structure with their specific use case.

Most of the time, however, software engineers compose by reference, making one part of the system conform to the interface of another and indirectly interact with the component exposing the interface through some pointer, handle, or name. These layers of indirection allow for more efficient reuse of components, clearer conceptual boundaries, and more specialized and dynamic composition. This benefits both the humans using the tools and the automated systems operating behind the scenes, at various time scales. Composition by named reference is so fundamental that one of the two foundational models of computation revolves exclusively around referencing by name and resolving those references!

Programming and networking

Traditionally, most sophisticated name-based composition acted at the levels of building programs or of connecting entire machines.

Composition by name is ubiquitous in programming. We reference packages we want to use by a name and a version. We identify libraries we depend on by name. We call functions, consume data, implement interfaces, etc., all via their names. Then our tools take over: Our editors provide us documentation by name, our compilers look up type information and calling conventions by name, our static and dynamic linkers connect needed functionality across binary components by name.

Any user of post-Internet computers is intimately familiar with our industry’s other venerable usage of names: finding another machine on the network. Every time you type a URL in your address bar, click a link, or configure an application to point to some specific service, you are composing computer resources by name. The most systematized and sophisticated aspect of these names is the domain name system, which gives you a high-quality way to connect a top-level name to a specific machine. Most of the rest of the URL is particular to each individual system, with at best weak conventions governing its use and meaning. But for both human and machine consumers, the domain name contains very precise semantics for composition at the machine level.

These names are not only crucial operationally, to put everything together in the system, they are also crucial cognitively, to put everything together in our minds. It’s a clichéd joke that naming something is one of the two hardest problems in computer science, but that joke is based on the very serious fact that a name lets us treat a complex system as a single unit, and a good name helps us do that effectively.

DNS and programming language symbol resolution are at this point completely entrenched. They’ve been around in the relevant forms for the entire productive career of a huge portion of our industry. In more recent decades, however, some new approaches to name-based composition in new domains have emerged.

Semantically-meaningful resource naming

After the previous section, some of you may be wondering: What about the filesystem? It’s true that files and other system resources have long been named by strings, with some weak structure for navigating trees of resources (i.e., the directory separator) and, on some systems, the type of file being named (the file extension). Similarly, other resources have long been referenced by name: https://google.com names not the server at google.com, but the specific HTTPS service exposed by that server over TCP port 443.

The problem with these and other naming systems is that they put too much of the work of composition onto the users. With a few minor exceptions like the Filesystem Hierarchy Standard and file extensions, file paths tell you almost nothing about the file in question that you didn’t already know. Almost no one looks at the full URL of a hyperlink and extracts meaningful information from it that wasn’t present in the context of the link. This results in the status quo: these names are almost always treated as fully opaque identifiers, and very few common naming rules apply across teams and systems.

Some systems have taken steps to move beyond this situation, however. In this section I will briefly cover a few novel tools that either already provide, or promise to provide, semantically meaningful names for composing different computational resources.

git

I assume most of you are familiar with git at a high level. At a primitive level, git operates on various types of objects, such as blobs, trees, or commits. At the human interface level, many of these objects are ultimately referenced by ad hoc unstructured names, such as “file x/y/z on branch foo”. That being said, you’ve probably seen commits referenced by a hash, such as 4149457c6358f702fffbefcc2b7f6e8a87f802fb. Hashes like this are what the human-readable names are translated into, and how all of the objects internally refer to each other.

What do the hashes mean? Each object can be serialized, i.e. written to a file such that it can be correctly reconstructed later, including references to any other objects it depends on. These serializations are canonical, such that any two objects that are the same will have byte for byte the same serialization. Using a cryptographic hash function on the contents of these serialized files then allows the git tools to give each object a short name that captures the full information content of the object, modulo unlikely hash collisions. We therefore call these content-addressed names.
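As a minimal sketch of how such a name is derived, the snippet below computes the name git gives a blob in its classic SHA-1 object format: the hash of a small header (`blob`, the content length, and a NUL byte) followed by the file contents. The function name `git_blob_name` is my own, chosen for illustration, and the sketch covers only blobs, not trees or commits.

```python
import hashlib


def git_blob_name(content: bytes) -> str:
    """Return the content-addressed name of a blob (SHA-1 object format)."""
    # The canonical serialization of a blob is a header plus the raw bytes.
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


# Identical contents always produce the same name, wherever they are computed.
first = git_blob_name(b"hello world\n")
second = git_blob_name(b"hello world\n")
# Changing a single byte produces an unrelated name.
changed = git_blob_name(b"hello world!\n")

print(first == second)   # same content, same name
print(first == changed)  # different content, different name
```

If you have git installed, `git hash-object <file>` should report the same name for a file with the same contents.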

Why should we use content-addressed names? In short, because they allow different components of the system to cheaply refer to each other with a high degree of reliability: If some commit object says that its associated filesystem tree has some file at a particular path, I know that whenever I refer to that file via relative references from the commit, I will get a file with exactly the same contents.

This allows for safe deduplication of files across commits and efficient communication protocols for distributing repositories across the network. It also gives a much more precise meaning to the names in question: “the file at x/y/z” can refer, at different times and on different systems, to any kind of file the relevant filesystem supports, whereas “the file at x/y/z in the tree of commit 4149457c6358f702fffbefcc2b7f6e8a87f802fb” refers, for most intents and purposes, to exactly one file with specific contents.

Nix

Nix is a package manager for Unix systems. It is built on several innovations, but the one relevant for this post is the Nix store, where the filesystem resources associated with packages are managed.

Like git, Nix manages some of its filesystem resources by content-addressed name, using essentially the same logic as git’s hash-based content addressing. By themselves, however, such names are inadequate to Nix’s goal of highly reliable and accurate dependency management with composition across packages. If I’ve built package foo 1.0 against some specific content-addressed instance of package bar 2.0, I can calculate the content and thus a content-addressed name of the resulting package. But if you want to build foo 1.0 against the newly released bar 2.1, you can’t reuse anything in that content-addressed name and must rebuild from scratch.

Nix addresses this issue with what I call recipe-addressed names: Some filesystem resources are named not by their own content but by the content of a serialization of a deterministic build recipe for creating them. The nature of these build recipes is such that it is much easier to substitute in different versions, configurations, etc. anywhere in the dependency tree and recalculate what the new name of the results should be.
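To make the idea concrete, here is a rough sketch of recipe addressing. It is not Nix's actual algorithm or file format: the `Recipe` class, the JSON serialization, and the truncated SHA-256 digest are all assumptions made for illustration. What it shows is that the name of foo 1.0 built against bar 2.1 can be recalculated from the recipes alone, before anything is built.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Recipe:
    """A hypothetical deterministic build recipe."""
    name: str
    version: str
    build_script: str
    inputs: Tuple["Recipe", ...] = ()


def recipe_name(recipe: Recipe) -> str:
    """Name a build result by hashing its recipe, not its output."""
    serialized = json.dumps(
        {
            "name": recipe.name,
            "version": recipe.version,
            "build_script": recipe.build_script,
            # Dependencies enter the hash through their own recipe names.
            "inputs": sorted(recipe_name(dep) for dep in recipe.inputs),
        },
        sort_keys=True,
    )
    digest = hashlib.sha256(serialized.encode("utf-8")).hexdigest()[:32]
    return f"{digest}-{recipe.name}-{recipe.version}"


bar_2_0 = Recipe("bar", "2.0", "make install")
bar_2_1 = Recipe("bar", "2.1", "make install")
foo_old = Recipe("foo", "1.0", "make install", inputs=(bar_2_0,))
foo_new = Recipe("foo", "1.0", "make install", inputs=(bar_2_1,))

# Swapping a dependency changes the name; no build is needed to learn it.
print(recipe_name(foo_old))
print(recipe_name(foo_new))
```

Because the dependency's name is part of the hashed recipe, a change anywhere in the dependency tree propagates upward into every name that depends on it.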

With content-addressed names for bootstrapping, and recipe-addressed names for building up more complex packages, Nix opens up a whole new world of package management. Nix can ensure that many different machines, however different their configurations, have exactly the same package installed.

Nix can safely reuse common dependencies between packages, parallelize builds, and distribute builds across multiple machines. Large centralized binary caches are possible with a decentralized trust mechanism built on top. On top of these capabilities, entire system configurations can be deterministically captured in a single name, allowing reproducible deployments and much more reliable migration of personal systems across computers.

Nelson

Nelson is a “cloud-native” service orchestration framework built to take advantage of modern distribution, resource management, and coordination mechanisms. Among other things, Nelson enables deploying an immutable infrastructure-style network topology while retaining efficient garbage collection of unneeded services. Nelson achieves this by requiring services to reference each other via name resolution mechanisms that it populates from high-level service configurations. Each service configuration specifies the other services it depends upon, providing both a service name, which has meaning to the user, and a semantic version, which contains information about cross-version compatibility. Nelson uses the information in the dependency specification to keep track of which services depend on which, allowing it to safely remove old instances of services that no other service depends upon.
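The garbage collection idea can be sketched as a reachability check over the declared dependency graph. This is only an illustration under simplifying assumptions, not Nelson's implementation or configuration format: services are identified by an exact (name, version) pair rather than a semantic version range, and the `removable` function and `keep` set are hypothetical names.

```python
from typing import Dict, Set, Tuple

Service = Tuple[str, str]  # (name, version), a simplification


def removable(deployed: Dict[Service, Set[Service]], keep: Set[Service]) -> Set[Service]:
    """Return deployed services that nothing we want to keep depends on."""
    reachable: Set[Service] = set()
    stack = list(keep)
    while stack:
        service = stack.pop()
        if service in reachable:
            continue
        reachable.add(service)
        # Follow the declared dependencies of this service.
        stack.extend(deployed.get(service, set()))
    return set(deployed) - reachable


deployed = {
    ("web", "1.0"): {("db", "1.0")},
    ("web", "2.0"): {("db", "1.1")},
    ("db", "1.0"): set(),
    ("db", "1.1"): set(),
}

# Only web 2.0 is still wanted, so web 1.0 and its db 1.0 can go.
print(sorted(removable(deployed, keep={("web", "2.0")})))
```

The check is only safe because every dependency is declared by name up front; an undeclared reference would be invisible to it.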

Unison

Unison is an alpha-level functional programming language primarily oriented around application and library development for distributed systems and web services. Its main innovation is to use content-addressed symbol names to refer to code. Rather than hashing the plain-text function or data definition written by the programmer, Unison computes the hash from the abstract syntax tree after human-readable names are abstracted out. This allows a number of valuable features for Unison programs:

- New human-readable names can be associated with the same data at nearly zero cost, including allowing different users to use different names for the same functions.

- Precise dependency management (similar to Nix) is possible because functions and other data refer to each other by exact name.

- The notion of “building your application” can be subsumed by automated background processes because any evaluation can be precisely named and therefore safely automatically compiled and cached.

- Computation and systems can be automatically distributed across arbitrary network topologies because exact differences in both code and previous evaluations can be identified.
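Hashing code "after human-readable names are abstracted out" can be sketched with a toy lambda-calculus syntax tree. This is not Unison's real representation or hashing scheme: the `Var`, `Lam`, and `App` classes and the `code_name` function are assumptions for illustration, and free names are kept as plain strings here for simplicity. Bound variables are replaced by their distance to the enclosing binder before hashing, so renaming them does not change the result.

```python
import hashlib
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Var:
    name: str


@dataclass(frozen=True)
class Lam:
    param: str
    body: "Term"


@dataclass(frozen=True)
class App:
    fn: "Term"
    arg: "Term"


Term = Union[Var, Lam, App]


def canonical(term: Term, bound: Tuple[str, ...] = ()) -> str:
    """Serialize a term with bound names replaced by binder distances."""
    if isinstance(term, Var):
        if term.name in bound:
            # Innermost binder is first, so index() finds the nearest one.
            return f"#{bound.index(term.name)}"
        return f"free:{term.name}"
    if isinstance(term, Lam):
        return f"(lam {canonical(term.body, (term.param,) + bound)})"
    return f"(app {canonical(term.fn, bound)} {canonical(term.arg, bound)})"


def code_name(term: Term) -> str:
    """Content-addressed name of a term, independent of local naming."""
    return hashlib.sha256(canonical(term).encode("utf-8")).hexdigest()


const_xy = Lam("x", Lam("y", Var("x")))  # \x -> \y -> x
const_ab = Lam("a", Lam("b", Var("a")))  # same function, different names
second = Lam("x", Lam("y", Var("y")))    # a genuinely different function

print(code_name(const_xy) == code_name(const_ab))
print(code_name(const_xy) == code_name(second))
```

Since the two spellings of the same function share one name, any human-readable label can be attached to that name afterwards without touching the code it refers to.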

What about Scarf?

From this sample of new composition technologies, we can induce a common principle: when we compose resources by name, and imbue those names with the right semantics, we can achieve massive benefits in terms of reproducibility, efficiency, and higher-order meaning. This insight raised an obvious question for me: What would a system based around that common principle look like? What benefits would it have? And what does this have to do with Scarf’s mission?

Keep an eye out for my next post to find out!

Beyond the Surface: How to Engage with the Quiet Members of your Open Source Community

In the dynamic realm of community management, marketing, and developer relations, success depends upon more than just attracting attention. It's about fostering meaningful relationships, nurturing engagement, and amplifying your community's impact.

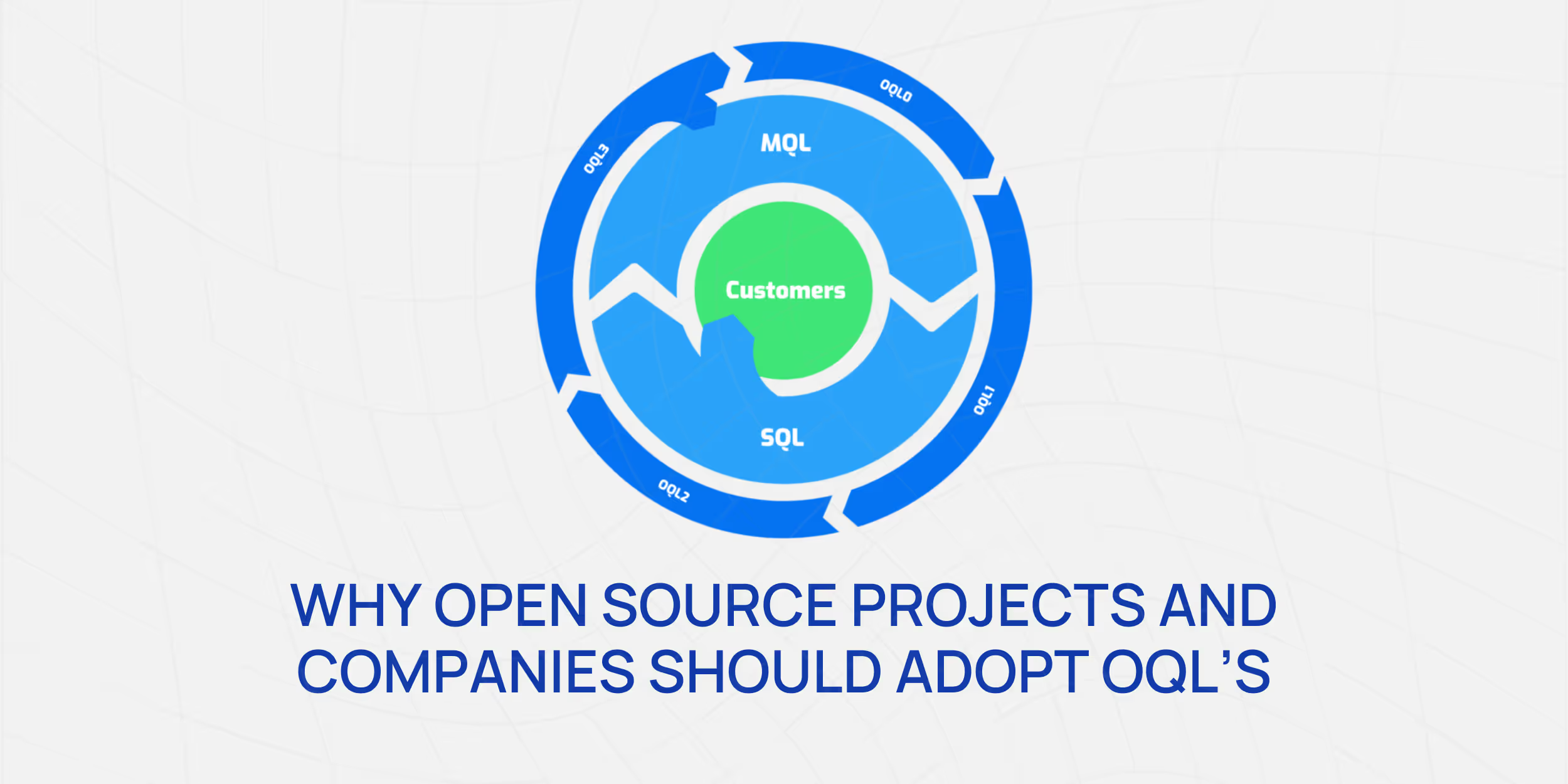

Why Open Source Projects and Companies Should Adopt "Open Source Qualified Leads"

The modern digital age, driven by the proliferation of open-source projects, is as much about community as it is about the code. This means understanding and engaging with potential adopters, contributors, and users becomes critical for the health and success of a project or product. Here enters the concept of "Open Source Qualified Leads" (OQL).

Mastering Telemetry in Open Source: A Simple Guide to Building Lightweight Call Home Functionality

Implementing a call-home functionality or telemetry within open-source software often raises privacy concerns within the community. Many parties, including enterprise security teams, customer advocates, and developers, express rightful apprehensions about the transmission, storage, and usage of data.